---

license: bsd-3-clause

library_name: braindecode

pipeline_tag: feature-extraction

tags:

- eeg

- biosignal

- pytorch

- neuroscience

- braindecode

- convolutional

---

# IFNet

IFNetV2 from Wang, J. et al. (2023) [1].

> **Architecture-only repository.** Documents the
> `braindecode.models.IFNet` class. **No pretrained weights are
> distributed here.** Instantiate the model and train it on your own
> data.

## Quick start

```bash
pip install braindecode
```

```python
from braindecode.models import IFNet

model = IFNet(
    n_chans=22,
    sfreq=250,
    input_window_seconds=4.0,
    n_outputs=4,
)
```

The signal-shape arguments above are illustrative defaults; adjust them to
match your recording.
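As a rough sanity check before instantiating the model, the signal-shape arguments can be turned into a sample count per window. The sketch below uses only the standard library. `window_samples` and `n_patches` are hypothetical helper names, not part of braindecode, and treating patches as whole, non-overlapping segments of `patch_size` samples is an assumption drawn from the parameter description rather than a confirmed detail of the implementation.

```python
def window_samples(sfreq: float, input_window_seconds: float) -> int:
    """Number of time samples in one input window."""
    return int(round(sfreq * input_window_seconds))


def n_patches(n_times: int, patch_size: int = 125) -> int:
    """Whole, non-overlapping patches that fit in n_times samples (assumed layout)."""
    if patch_size <= 0:
        raise ValueError("patch_size must be positive")
    return n_times // patch_size


# Quick-start values: 250 Hz sampling rate, 4-second windows.
n_times = window_samples(sfreq=250, input_window_seconds=4.0)
print(n_times)             # 1000
print(n_patches(n_times))  # 8
```

If your recording uses a different sampling rate or window length, a check like this may help you decide whether the default `patch_size` still suits it.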

## Documentation

- Full API reference: <https://braindecode.org/stable/generated/braindecode.models.IFNet.html>

- Interactive browser (live instantiation, parameter counts): <https://huggingface.co/spaces/braindecode/model-explorer>

- Source on GitHub: <https://github.com/braindecode/braindecode/blob/master/braindecode/models/ifnet.py#L31>

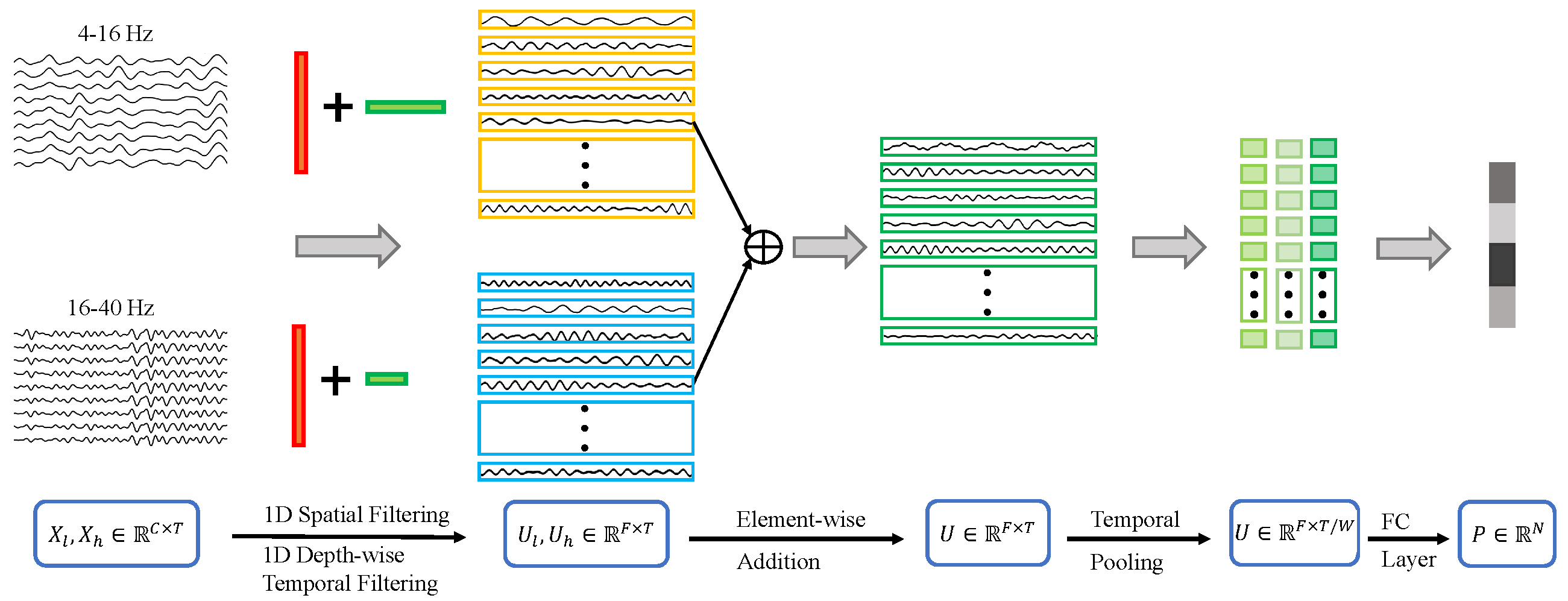

## Architecture

## Parameters

| Parameter | Type | Description |
|---|---|---|
| `bands` | list[tuple[int, int]] or int or None, default=[(4, 16), (16, 40)] | Frequency bands for filtering. |
| `out_planes` | int, default=64 | Number of output feature dimensions. |
| `kernel_sizes` | tuple of int, default=(63, 31) | Kernel sizes for the temporal convolutions. |
| `patch_size` | int, default=125 | Size of the patches for temporal segmentation. |
| `drop_prob` | float, default=0.5 | Dropout probability. |
| `activation` | nn.Module, default=nn.GELU | Activation function after the InterFrequency Layer. |
| `verbose` | bool, default=False | Whether the filtering layer prints verbose output. |
| `filter_parameters` | dict, default={} | Additional parameters for the filter bank layer. |
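When `bands` is given as a list, each entry can be read as a (low, high) pair of band edges. A digital filter can only represent frequencies below the Nyquist limit of `sfreq / 2`, so it may be worth checking custom bands before building the model. The following is a minimal sketch, assuming the edges are in Hz. `check_bands` is a hypothetical helper, not part of braindecode, and it covers only the list form of `bands`, not the `int` or `None` forms.

```python
def check_bands(bands, sfreq):
    """Validate (low, high) band edges in Hz against the Nyquist limit."""
    nyquist = sfreq / 2.0
    checked = []
    for low, high in bands:
        if not 0 <= low < high:
            raise ValueError(f"invalid band ({low}, {high}): need 0 <= low < high")
        if high >= nyquist:
            raise ValueError(f"band ({low}, {high}) reaches Nyquist ({nyquist} Hz)")
        checked.append((float(low), float(high)))
    return checked


# Default bands from the table, with the quick-start sampling rate of 250 Hz.
print(check_bands([(4, 16), (16, 40)], sfreq=250))
# [(4.0, 16.0), (16.0, 40.0)]
```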

## References

1. Wang, J., Yao, L., & Wang, Y. (2023). IFNet: An interactive frequency convolutional neural network for enhancing motor imagery decoding from EEG. IEEE Transactions on Neural Systems and Rehabilitation Engineering, 31, 1900-1911.

2. Wang, J., Yao, L., & Wang, Y. (2023). IFNet: An interactive frequency convolutional neural network for enhancing motor imagery decoding from EEG. https://github.com/Jiaheng-Wang/IFNet

## Citation

Cite the original architecture paper (see *References* above) and braindecode:

```bibtex
@article{aristimunha2025braindecode,
  title   = {Braindecode: a deep learning library for raw electrophysiological data},
  author  = {Aristimunha, Bruno and others},
  journal = {Zenodo},
  year    = {2025},
  doi     = {10.5281/zenodo.17699192},
}
```

## License

BSD-3-Clause for the model code (matching braindecode).
If you fine-tune from a pretrained checkpoint, the resulting weights
inherit the license of that checkpoint and its training corpus.
